Fact and Fiction: The Real Dangers of Duplicate Content in SEO

Duplicate content in SEO is often seen as the faceless enemy. It seems obvious that duplicate content is just content duplicated across the internet, but there are a lot of questions concerning it. Everyone knows it exists. Everyone knows they should fear it. But no one is quite sure what it is (or isn’t). Fear of the unknown is common, but we don’t want to stay afraid.

That’s why we want to shed some light on the myths and talk about the true dangers of duplicate content in SEO.

What’s the issue?

First, we need to examine just why duplicate content can be a bad thing in the first place. The answer is pretty simple: Search engines want to eliminate search engine manipulation.

There are plenty of black hat marketing techniques out there. These use strategies to increase SERP (search engine results page) rankings artificially. Strategies include keyword stuffing, scraping blogs, and manipulating social media. Search engines do not allow this type of marketing. But that doesn’t stop black hat marketers from employing these tactics until they are caught. Duplicate content can sometimes fall into this category.

Yes, there are some marketers who duplicate content purposefully (more on that in a bit) on different websites. However, there are plenty of marketers out there accidentally falling victim to duplicate content in SEO. There are plenty of ways this can happen. But is it as big of a negative as some websites would have you believe? Probably not. But first, let’s look at what the two biggest search engines have to say on the matter.

What about republishing content?

As mentioned earlier, sometimes we republish content purposefully. This allows our content to show up in more than one location. According to Google and Bing, this can be a bad thing as it confuses end users. Yes, there is a risk that this becomes duplicate content. However, it’s a really small risk as you’ll see in the example below.

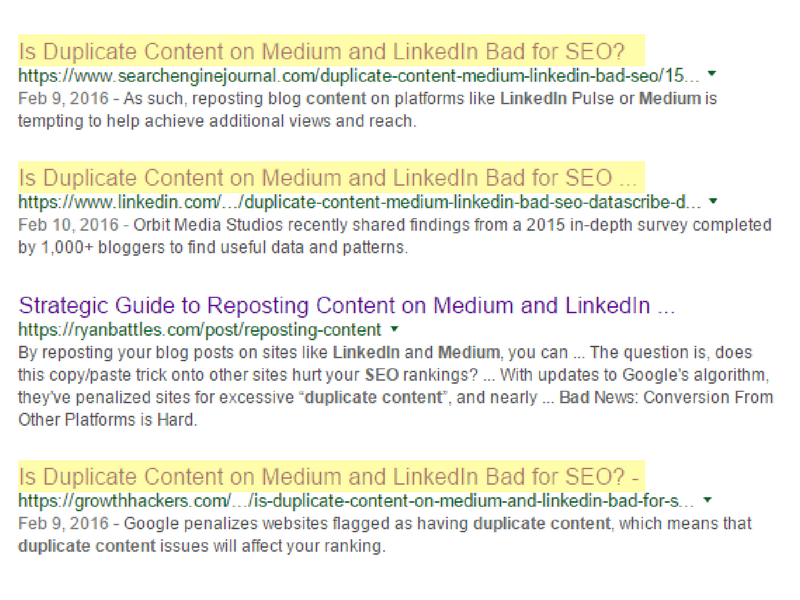

In 2016, Search Engine Journal published an article on this specific issue. One day later, they re-published it to LinkedIn. Someone even immediately wrote a cross-post to the original article, where just the first paragraph and title is used on the blog of another website that redirects to the original post.

It’s important here to notice all of these results appear when anyone googles “Is duplicate content on Medium and LinkedIn bad?” Is Google punishing them? It doesn’t seem like they have been, and these posts have been live for over a year now.

Be aware of the original

When it comes to republishing your content verbatim on other sites, the biggest issue is that the republished content can outrank the original. That’s not preferable, but as long as you’re building backlinks to your website, you can still gain valuable traffic. It is a necessity to add backlinks to your republished content. Doing this allows you to control the relevancy of what you’re linking to. You can also control the quality of the sites you’re republishing to.

Perhaps the most relevant thing to keep in mind when republishing comes from Google’s section on syndication:

If you syndicate your content on other sites, Google will always show the version we think is most appropriate for users in each given search, which may or may not be the version you’d prefer. However, it is helpful to ensure that each site on which your content is syndicated includes a link back to your original article. You can also ask those who use your syndicated material to use the noindex meta tag to prevent search engines from indexing their version of the content.

Just make sure when you do republish content that you do so at least two weeks after the original article has been published. More on promoting your content here.

So, what constitutes manipulation?

We’ve confirmed that reposting content verbatim to another website shouldn’t impact search engine results (as long as that website is relevant and reputable). So what is the manipulative content that Google and Bing are so concerned about?

Search engines are concerned about pointing site visitors to the most relevant pages based on their searches. If you have multiple pages on your website with identical or nearly identical content, the search engine is not sure where to point visitors.

This can negatively affect rankings since you have multiple pages on your own website competing against each other. It is also a form of blackhat marketing, as it seems like you are stuffing your website with keywords in order to improve your rankings. While we want to be at the top of Google search results, there is no quick or easy way to achieve this. You have to follow the rules.

Certain types of blogs may be perceived as manipulation. For example, poorly written blogs stuffed with keywords which are then duplicated across your site or other sites. The content you put out there should be well-conceived, edited, and approved.

The punishment for engaging in this type of “spammy” duplicate content is removal from search engine rankings altogether.The way you promote your content should not be conceived as malicious. Reach out on Google Search Console’s forum to confirm you’re not accidentally engaged in manipulative content promotion.

What incidental duplication should you be aware of?

You still need to address duplicate content in SEO that isn’t your fault. If there are multiple versions of a page, Google and Bing have to work harder to choose the correct page.

Here are the types of duplicate content that your website often “creates on its own” that you need to be aware of:

- Website protocol variations – Your website might be accessible using both of the protocols HTTP:// or HTTPS://. This means two versions of your website now exist. Hint: If you have both, favor HTTPS://, Google does.

- URL variations – URL variations can occur in both the beginning and the end of a URL. For instance, you might notice that sites are accessible whether or not you use “www,” but often both versions exist. Alternately, analytics codes might create multiple versions of the same URL. This usually affects the end of the URL and is notable because of an excess of equal signs.

- Third-party scrapers – There are sites out there that “scrape” websites for content in order to repost on their own blog or website. Most scraping is not harmful to the original website owner. However, these links may be deemed “low quality” or “spammy” based on Google’s definition of a “link scheme.” That type of third-party linking can negatively affect your website.

How to avoid (and fix) duplicate content

Use Google Search Console to find and fix duplicate content issues on your site that you aren’t aware of.

Once you find duplicate content, you have a few options:

- Set up 301 redirects – For intentional content duplication that you’d now like to remove, use 301 redirects. 301 redirects forward users to the page you decide should be the one-and-only.

- Disavow backlinks – If you’re the victim of a spammy scraper, you can disavow specific backlinks so your connection to the scraper is severed.

- Implement canonical attribution – The best way to fix issues with multiple URLs or protocols creating duplicate content on your site is to set up canonical links. Add a canonical link element from the duplicate page pointing to the page you decide should be the “source of truth.” Do this by adding the rel=”canonical” link element to head of the web page.

Still concerned about your website’s duplicate content issues? Visit Google and Bing’s content duplication pages for the full guidelines. And DemandZEN is always here to help.

You Might Also Enjoy These Posts

Gamification Strategies: Badges in B2B Marketing

7 Reasons Why Relevant Data is Important to Your Organization

Welcome To DemandZEN

DemandZEN specializes in Account-Based Demand Generation and solving the challenges around finding, engaging and converting target accounts into real opportunities for B2B Technology and Services companies.